Configuring AI services

Prerequisites

Before configuring AI Services, make sure the following conditions are met:

- You must be using DCT Enterprise and have administrator permissions.

- Your system must meet the memory and CPU requirements for DCT.

- You must have access to the Delphix Download Center to retrieve the model file.

The DCT synthetic data list generation feature runs using AI resources deployed within the DCT cluster. No requests are made over the network to external AI services. Model uploads and usage should follow your organization's internal access control and audit practices.

Enabling AI Services

Appliance

In the appliance deployment of DCT, the AI Services containers (ai-control and ai-execution) run by default if the VM has at least 16 GB of RAM.
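The 16 GB threshold can be checked from a shell on the VM. The sketch below is illustrative only: `ram_meets_minimum` and `read_mem_total_kb` are hypothetical helper names, it assumes a Linux host that exposes /proc/meminfo, and it does not reflect how DCT itself measures memory. MemTotal is usually slightly lower than the installed RAM, so a VM provisioned with exactly 16 GB may report a little less.

```shell
# Hypothetical helper: succeeds when total memory (in kB) is at least
# min_gib GiB. Not part of DCT; shown only to illustrate the check.
ram_meets_minimum() {
  total_kb="$1"
  min_gib="$2"
  [ "$total_kb" -ge $(( min_gib * 1024 * 1024 )) ]
}

# Reads the MemTotal value (in kB) from /proc/meminfo on a Linux host.
read_mem_total_kb() {
  awk '/^MemTotal:/ { print $2 }' /proc/meminfo
}

# Usage on the appliance VM:
#   if ram_meets_minimum "$(read_mem_total_kb)" 16; then
#     echo "At least 16 GiB reported"
#   else
#     echo "Less than 16 GiB reported"
#   fi
```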

Kubernetes

- Open values.yaml. At the end of the file, review the documented configuration options for the AI service pods.

- At minimum, uncomment the following lines in values.yaml to enable AI startup and feature flagging:

    aiRegisterAtStartup: true
    enabledFeatureFlags: AI_REGISTER_AT_STARTUP

- AI Services will create two new volumes to store models and other temporary files. These volumes are created when the feature flag is enabled in the previous step. To override the default size setting for these volumes, update the following settings in values.yaml:

    aiExecutionDataStorageSize: 10Gi
    aiControlDataStorageSize: 10Gi

- Due to the large size of AI model files, you must increase the maximum body size for requests in ingress. Without this update, it will not be possible to upload model files. Create a new ingress with the content below:

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: model-upload-ingress
      namespace: dct-services
      annotations:
        nginx.ingress.kubernetes.io/backend-protocol: "HTTPS"
        nginx.ingress.kubernetes.io/proxy-body-size: 5g
    spec:
      rules:
        - http:
            paths:
              - path: /dct/v3/ai/management/model-upload
                pathType: Prefix
                backend:
                  service:
                    name: proxy
                    port:
                      number: 443

  - For other ingress controllers, configure the maximum body size according to the controller’s documentation.

- Apply the changes. These properties start AI Services in the DCT cluster. Replace <release-name>, <chart-path>, and <namespace> with your values:

    helm upgrade <release-name> <chart-path> -n <namespace>

Configuring ingress for AI model uploads (required for uploads up to 5 GB)

To support AI model uploads up to 5 GB, you must configure a dedicated ingress for the AI control service.

- Create a file named ai-model-upload-ingress.yaml with the content below:

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: ai-model-upload-ingress
      namespace: dct-services
      annotations:
        nginx.ingress.kubernetes.io/backend-protocol: "HTTPS"
        nginx.ingress.kubernetes.io/proxy-body-size: 5g
    spec:
      rules:
        - http:
            paths:
              - path: /dct/v3/ai/management/model/upload
                pathType: Prefix
                backend:
                  service:
                    name: proxy
                    port:
                      number: 443

- Run the following command:

    kubectl apply -f ai-model-upload-ingress.yaml

Setting up AI Services

-

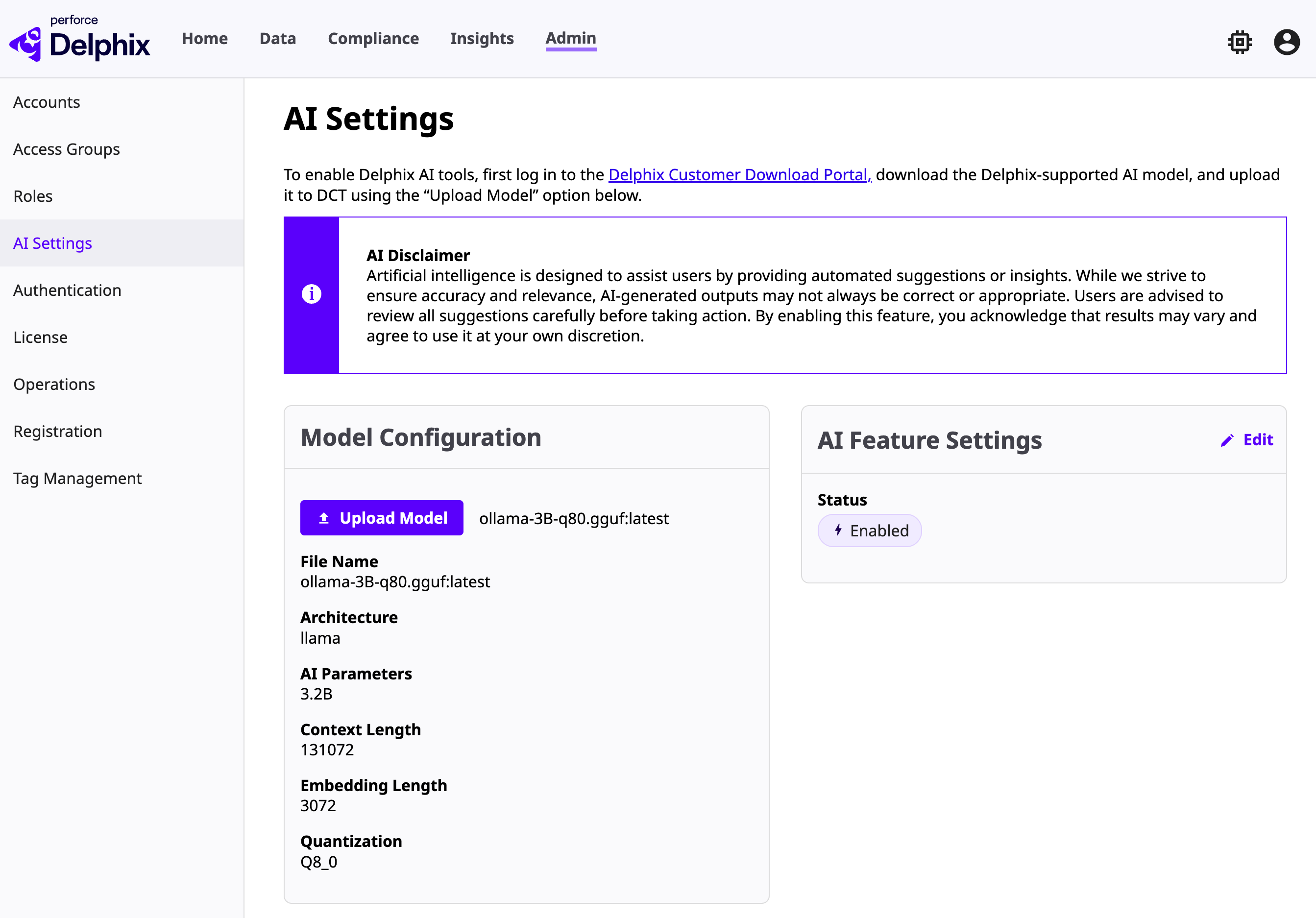

Log into DCT and navigate to Admin Settings > AI Services.

-

Toggle the feature switch to enable AI Services. This step only enables the service—it does not install the model.

-

Go to the Delphix Download Center, locate the supported model file, and download it to a secure local machine.

-

The file is approximately 3.2 GB and should be handled in accordance with your organization’s file management policies.

-

-

Return to Admin Settings > AI Services in the DCT interface.

-

Use the Upload Model option to select the file you downloaded.

-

Once uploaded, the model becomes active.

-

Uploading a new model will overwrite any existing one.

-

-

After uploading, verify that the model has been correctly installed by looking for details like model family, name, a context window, and more.

-

The system will confirm successful upload, and you can test the installation by initiating a synthetic data generation task.
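Before uploading, it can help to confirm that the downloaded model file fits within the 5 GB request body limit configured on the ingress. The sketch below is a minimal, hedged example: `file_within_limit` is a hypothetical helper, the file name in the usage comment is a placeholder, and the limit assumes the `5g` annotation value means 5 × 1024³ bytes. The HTTP request body may be somewhat larger than the file itself because of upload encoding overhead.

```shell
# Hypothetical helper: succeeds when the file exists and is no larger
# than limit_bytes. Returns 2 when the file does not exist.
file_within_limit() {
  file="$1"
  limit_bytes="$2"
  [ -f "$file" ] || return 2
  # wc -c prints the size in bytes; tr strips padding some platforms add.
  size=$(wc -c < "$file" | tr -d ' ')
  [ "$size" -le "$limit_bytes" ]
}

# Assumed limit: 5 GiB, matching a proxy-body-size of 5g.
UPLOAD_LIMIT_BYTES=$(( 5 * 1024 * 1024 * 1024 ))

# Usage (the file name is a placeholder):
#   file_within_limit ./model-file "$UPLOAD_LIMIT_BYTES" && echo "OK to upload"
```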

Updating the model

When new models are released, download the latest version from the Download Center and follow the same upload process. Once uploaded, the new model automatically replaces the old one.

Troubleshooting

If you encounter issues:

- Confirm the system has adequate memory and disk space.

- Review the DCT logs and Admin > Operations for additional context.

  - Look for operations of type LLM Upload Model to determine whether the operation completed successfully. If it did not, the operation includes an error explaining why it failed.

- Verify that the correct model version was downloaded.

- For Kubernetes DCT deployments only, check connectivity between the AI execution and control containers.

- Confirm that the ai-control and ai-execution service pods are running and healthy.

App/Gateway container

Check the gateway logs to confirm that the AI route was added successfully:

2025-07-13T17:52:55.316Z INFO [gateway, ] 1 --- [gateway] [taskScheduler-1] [ ] c.d.api.gw.route.GatewayRouteConfig : Successfully fetched routing info for AI App.
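Searching the gateway logs for this message can be scripted. The following is a small sketch, not an official tool: `ai_route_registered` is a hypothetical helper that reads log text on standard input, and `<gateway-pod-name>` in the usage comment is a placeholder for the gateway pod in your deployment.

```shell
# Hypothetical helper: succeeds when the log text on stdin contains the
# gateway message confirming that the AI route was fetched.
ai_route_registered() {
  grep -q 'Successfully fetched routing info for AI App'
}

# Usage on Kubernetes (pod name is a placeholder):
#   kubectl logs <gateway-pod-name> -n dct-services | ai_route_registered \
#     && echo "AI route found" || echo "AI route not found in logs"
```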

Confirm AI Services are running

Once DCT AI services are started, there should be two new services in the cluster:

- ai-control: Control plane container

- ai-execution: Execution plane container
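On Kubernetes, one way to check these two services is to filter the pod list for entries that are not fully ready. The sketch below is an assumption-laden example: `unhealthy_ai_pods` is a hypothetical helper, it assumes pod names begin with ai-control or ai-execution, and it parses the default column layout of `kubectl get pods --no-headers` (NAME, READY, STATUS, ...).

```shell
# Hypothetical helper: reads `kubectl get pods --no-headers` output on
# stdin and prints the names of AI pods that are not Running or whose
# READY count (for example 0/1) shows containers that are not ready.
unhealthy_ai_pods() {
  awk '$1 ~ /^ai-(control|execution)/ {
         split($2, ready, "/")
         if ($3 != "Running" || ready[1] != ready[2]) print $1
       }'
}

# Usage:
#   kubectl get pods -n dct-services --no-headers | unhealthy_ai_pods
# No output means both AI pods report Running and ready.
```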

Control plane

Logs confirming startup and execution service registration may look like this:

2025-07-18T16:32:16.672Z INFO [ai-control, ] ... : Started DCTAiApplication in 35.698 seconds (process running for 51.131)

2025-07-18T16:32:23.901Z INFO [ai-control, ] ... : Initializing Spring DispatcherServlet 'dispatcherServlet'

2025-07-18T16:32:24.067Z INFO [ai-control, ] ... : New status for LLM gateway container ai-execution-b5d959584-ml5dq is RUNNING

Execution plane

Example log activity:

Sending status update: RUNNING to http://ai-control:5009/dct-internal/update-llm-gateway-status

retry_until_success is true, will attempt up to 1000 times

Attempt 1 of 1000

HTTP error: 000 ... will retry

Attempt 3 of 1000

Status update sent successfully: 200

Confirm persistent storage

Appliance

Use Docker to list containers and mounted volumes with:

docker ps -a --format "table {{.Names}}\t{{.Mounts}}"

Expected entries include dct-ai-execution-1 and dct-ai-control-1 with mounted storage paths.

Kubernetes

Check persistent volumes in the namespace with:

kubectl get pv -n dct-services

Look for claims such as dct-services/ai-control-data and dct-services/ai-execution-data.
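The claim check can also be scripted. This sketch makes several assumptions: `ai_claims_bound` is a hypothetical helper, the claim names are taken literally from the examples above and may differ in your deployment, and the parsing relies on the default `kubectl get pv --no-headers` column order, where STATUS is the fifth column and CLAIM is the sixth.

```shell
# Hypothetical helper: reads `kubectl get pv --no-headers` output on
# stdin and succeeds only when both assumed AI claims are Bound.
ai_claims_bound() {
  pv_list=$(cat)
  for claim in dct-services/ai-control-data dct-services/ai-execution-data; do
    printf '%s\n' "$pv_list" |
      awk -v c="$claim" '$5 == "Bound" && $6 == c { found = 1 }
                         END { exit !found }' ||
      return 1
  done
}

# Usage:
#   kubectl get pv --no-headers | ai_claims_bound \
#     && echo "AI volumes bound" || echo "AI volume claim missing"
```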

Check container logs

If services fail to start, review logs for potential issues such as:

- Insufficient memory

- Insufficient CPU

- Persistent storage errors

- Access permission problems

Use the following commands to review logs:

Kubernetes

kubectl logs <pod-name> -n dct-services

Appliance

docker logs <container-id>